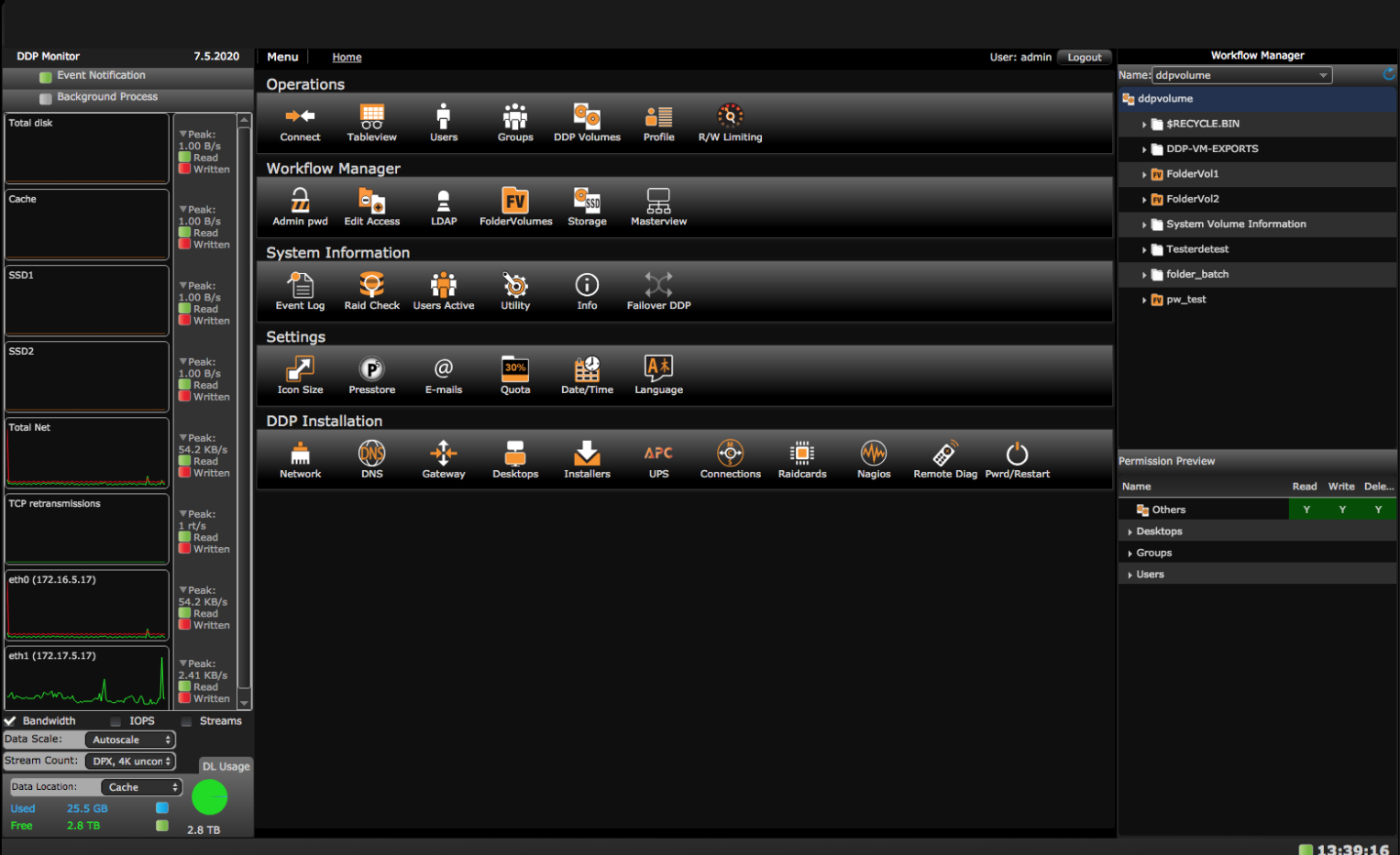

AVFS is a parallel, scale-out, high-availability (HA) SAN file system.

AVFS stands for Ardis Virtual File System.

AVFS was developed in-house from the ground up and has been in use in the media and entertainment (M&E) market for four years. It comes standard with iSCSI for block I/O of unstructured data and uses AVFS for the metadata. AVFS is built on key-value and B-tree databases. It has a small footprint and is fast. It is delivered pre-installed as a high-availability setup on a 1U dual AVFS Head running Debian 10. The dual AVFS Head has 2x dual 10GbE/RJ45 ports and 2x two PCIe slots for network cards. When two slots are not enough, a 3U version of the AVFS Head can be delivered.

THE SETUP IS SIMPLE

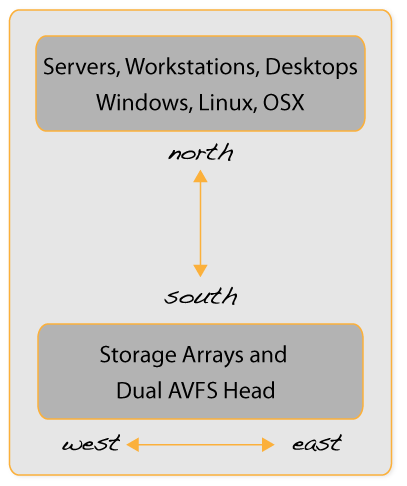

On clients (servers, workstations, desktops), the AVFS client driver is installed to connect to the infrastructure (network, dual AVFS Head, and storage arrays). Clients communicate with the dual AVFS Head via the AVFS driver and with the storage arrays via iSCSI, iSER, FC, or NVMe-oF (north-south).

iSCSI is easy to deploy because it comes pre-installed on Windows and Linux, and iSCSI for Mac is delivered as part of AVFS. HPC clients requiring extreme bandwidth connect to storage arrays via iSER and/or NVMe-oF. There is also metadata, and possibly data, communication between the storage arrays and the dual AVFS Head (east-west), as the figure shows.

HOW DOES IT WORK?

LUNs are created on the storage arrays; in AVFS they are called Data Locations (DLs). The LUNs are permanently mounted on clients and are accessed in parallel. Directories (folders) of the AVFS file system can be mounted as volumes, which are then called folder volumes. Each file in a folder volume has a path to a single DL, and that can be any DL. When a file is accessed, AVFS informs the client OS of the file's data path so that the client can access the DL.

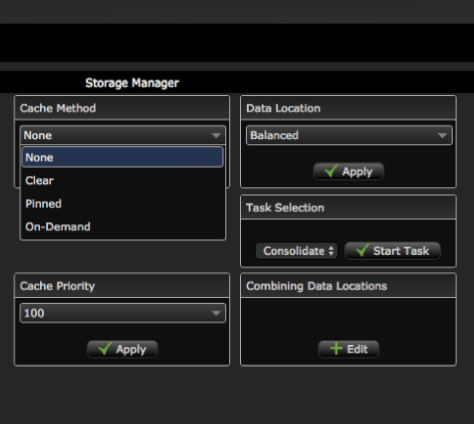

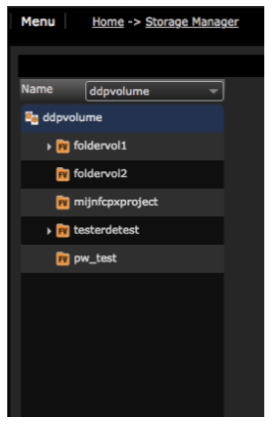

The Storage Manager page of the web interface is where the DL for the file data is determined.

Different files in a folder volume can have different paths for their data. When Balanced is selected, files are distributed across the DLs using round robin.
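To illustrate the round-robin idea, here is a minimal sketch in Python. It is not the AVFS implementation: the function name `assign_round_robin`, the file names, and the DL labels are all hypothetical and only show how successive files could end up on different DLs.

```python
from itertools import cycle


def assign_round_robin(file_names, data_locations):
    """Map each file to exactly one DL, cycling through the DLs in order.

    Hypothetical helper for illustration only; not part of AVFS.
    """
    if not data_locations:
        raise ValueError("at least one Data Location is required")
    dl_cycle = cycle(data_locations)
    # Each new file takes the next DL in the cycle.
    return {name: next(dl_cycle) for name in file_names}


# Hypothetical files in one folder volume and three hypothetical DLs.
placement = assign_round_robin(
    ["clip1.mov", "clip2.mov", "clip3.mov", "clip4.mov", "clip5.mov"],
    ["DL1", "DL2", "DL3"],
)
for name, dl in placement.items():
    print(name, "->", dl)
```

In this sketch the fourth file wraps around to the first DL again, so files written one after another are spread evenly over the available DLs.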

IS IT FAST?

Because AVFS is a SAN file system, it does not influence data transfers. The total system performance is the sum of the performance of the individual storage arrays. Per-client bandwidth can be as high as 20 GB/s. Performance is determined by the storage array and DL that hold the file data, the network interface, and the client workstation and application. The client application determines how many files can be queued and/or accessed in parallel.
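As a rough back-of-the-envelope sketch of the statements above, the Python snippet below sums per-array bandwidth for the system total and estimates what one client might see. The bottleneck (minimum) model for a single client is an assumption made for illustration, and all numbers and function names are hypothetical rather than measured AVFS figures.

```python
def total_system_bandwidth(array_gb_per_s):
    """System total: the sum of the bandwidth of each storage array (GB/s)."""
    return sum(array_gb_per_s)


def client_bandwidth_estimate(dl_gb_per_s, nic_gb_per_s, client_gb_per_s):
    """Assumed model: a single transfer is limited by its slowest component,
    i.e. the DL holding the file, the network interface, or the client."""
    return min(dl_gb_per_s, nic_gb_per_s, client_gb_per_s)


# Hypothetical example: three arrays and one client with a slower NIC.
arrays = [5.0, 5.0, 10.0]
print("system total:", total_system_bandwidth(arrays), "GB/s")
print("one client:", client_bandwidth_estimate(10.0, 2.5, 6.0), "GB/s")
```

Under these assumptions, adding a storage array raises the system total, while an individual client only benefits if its own network interface and application can keep up.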

Take a closer look at the web GUI and the Balanced Storage Manager by clicking on the pictures below.